SARAS is an interdisciplinary organization focusing on the safety, reliability, and security of autonomous systems. We aim to help all stakeholders design, build, operate, and certify safe and reliable autonomous systems. We help governments, agencies, manufacturers, and other researchers create frameworks under which reliable and safe autonomous systems are designed and operated.

SARAS focuses on multiple aspects of Safety and Reliability of Autonomous Systems. We develop research, solutions, and products for a safer future.

Human-System Interaction

Most Autonomous Systems are deployed in a “mixed” environment, in which they interact with non-autonomous systems. Autonomous cars will share the streets with human-driven cars, pedestrians, and cyclists; autonomous ships may encounter non-autonomous ships during their voyage; drones may share airspace with human-piloted aircraft. Autonomous Systems may also depend on humans for operation, such as remote operators or an onboard safety driver who should take over control when needed. Humans will also interact with Autonomous Systems as users, such as in automated cars operating as Mobility-as-a-Service or in autonomous passenger ferries. Finally, these systems will interact with society in a broader sense, which involves societal acceptance and trust.

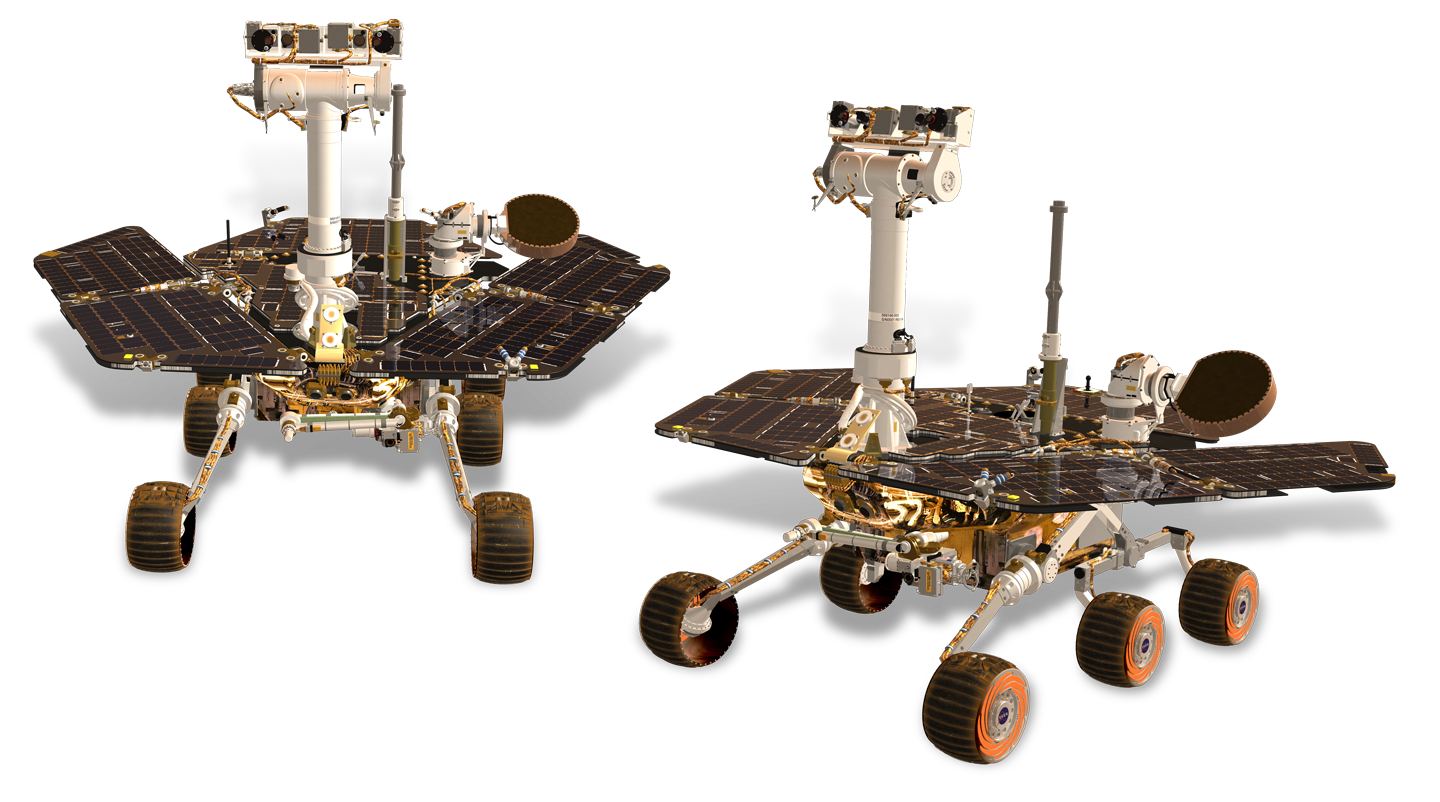

Hardware and Software Reliability

The equipment and tools of Uncrewed Autonomous Systems may need higher reliability over the duration of a mission than those of their crewed counterparts. For instance, many repairs and maintenance tasks onboard vessels are currently performed by the crew. Uncrewed vessels thus need a high likelihood of completing several missions before returning to a dock with the required maintenance facilities and crew, similar to the concept of Maintenance Free Operating Periods proposed by the aeronautical industry. Autonomous Systems can also include some form of self-repair. In addition to hardware reliability, software reliability is of critical importance for Autonomous Systems.

Risk Management and Risk-Informed Decision Making

Risk can be assessed and managed from different perspectives, e.g., functional risk, operational safety risk, and enterprise risk. Risk assessment and modeling are necessary processes in the development of Autonomous Systems for comparing designs, communicating risks to regulators and society, and developing risk mitigation strategies. Autonomous Systems introduce new hazardous events and complexities that may require new risk frameworks. Moreover, the connectivity of these systems and the availability of data allow online dynamic risk models to be applied. These models can assist decision-making during operations by informing operators or software about the safer course of action in real time.

Legal and Regulatory Aspects

Autonomous Systems pose legal and regulatory challenges. Regulators face the challenge of developing new regulations or adapting existing ones to accommodate autonomous and semi-autonomous systems, while keeping up with the pace of technology development. These challenges range from assessing the risks of Autonomous Systems’ operational designs (e.g., having a driver onboard or being constantly monitored remotely) to developing and enforcing novel procedures for systems’ operations and defining liability should an incident occur.

Operational Safety for Level 4 Automated Driving System Fleets - Funded by USDOT National Highway Traffic Safety Administration.

This project is conducted in cooperation with Transportation Research Center Inc. (TRC) and ToXcel. It aims to identify the safety risks associated with Level 4 ADS Mobility as a Service (MaaS) operations, as well as the fleet operator's responsibilities and activities for mitigating such risks.

To identify the operators’ operational safety responsibilities and propose risk mitigation actions, the team will perform a risk assessment of ADS MaaS operations. The project tasks include: i) conducting hazard identification of Level 4 ADS MaaS operations, ii) performing risk assessment, iii) identifying fleet operators’ operational safety responsibilities, iv) proposing activities that could help fleets mitigate risks and fulfill their operational safety responsibilities, and v) evaluating the mitigation activities.

Concurrent Task Analysis for Autonomous Systems Safety

This project concerns the development and extension of Concurrent Task Analysis (CoTA) for modeling human, hardware, and software tasks during autonomous systems’ operation. Applications include hazard identification, procedure development, and failure propagation identification, among others.

CoTA was initially developed in the context of Maritime Autonomous Surface Ships (MASS) and has since been applied to Autonomous Remotely Operated Vehicles (AROVs). Current developments include the extension and formalization of task types, and application to Autonomous Driving Systems and Autonomous Ferries operations.

International Workshop on Autonomous Systems Safety

The International Workshop on Autonomous Systems Safety (IWASS) is a joint effort by the B. John Garrick Institute for the Risk Sciences at the University of California, Los Angeles (UCLA) and the Norwegian University of Science and Technology (NTNU).

IWASS is an invitation-only event that gathers key experts in autonomous systems safety from academia, industry, and regulatory agencies. IWASS aims to identify common challenges related to the safety, reliability, and security (SRS) of autonomous systems, covering autonomous maritime, marine, land, and aerospace systems, and to discuss and propose possible solutions to the identified challenges.

Risk Assessment for Operational Safety of Autonomous Truck Mounted Attenuator (ATMA) - Funded by Autonomous Maintenance Technology (AMT) Pooled Fund Study

The AMT Pooled Fund program has recently funded a tabletop analysis of different types of crash scenarios and the subsequent actions by different stakeholders. However, ATMA deployment risks extend beyond those that arise during and after a crash. It is also critical to understand the potential major operational safety risks of ATMA deployment before crashes occur, and it is equally or even more important to identify countermeasures that prevent those crashes from happening. Identifying and quantifying these risks and their impacts can help DOTs prioritize them, work with DOT engineers to deploy corresponding countermeasures that ensure safety during ATMA deployment, and generate additional product requirements.

SARAS applies a multidisciplinary approach to assessing the safety and reliability of Autonomous Systems. We have extensive expertise in developing and applying risk and reliability methods across multiple industries, stakeholders, and applications. Our expertise includes, but is not limited to:

- Development and application of risk assessment methods
  - Event Sequence Diagrams (ESD)
  - Event Tree Analysis (ETA)
  - Failure Mode and Effect Analysis (FMEA)
  - Hazard and Operability Analysis (HAZOP)
  - Fault Tree Analysis (FTA)
  - Bayesian Networks
  - Hybrid-Causal Logic Modeling (HCL)
  - Probabilistic Risk Assessment (PRA)
  - Dynamic Risk Assessment (DRA)
  - Quantitative Risk Analysis (QRA)

- Development of Web-Apps and Desktop-based software for risk analysis, risk management, and risk-informed decision making.

- Development and Application of methods for data acquisition and processing for evaluation of autonomous systems operations under a risk perspective.
  - Machine Learning
  - Natural Language Processing
  - Deep Learning

- Advanced models for PHM of highly automated systems for real-time data treatment and online decision-support

- Development and application of methods for modeling and assessing human-system interaction in highly automated and autonomous systems design, operation, and maintenance.
  - Static and dynamic Human Reliability Analysis methods
  - Organizational Factors
  - Human-system interaction in automated and autonomous systems
  - Task-switching scenarios
  - Task Analysis
  - Human Factors Engineering

We're expanding our team! Join us

For partnerships, research collaborations, and general inquiries, please contact Dr. Marilia Ramos at MARILIA@RISKSCIENCES.UCLA.EDU

We look forward to hearing from you.